Ansys is committed to setting today's students up for success by providing them with free simulation engineering software.

For United States and Canada

+1 844.462.6797

Join Us for Simulation World 2024

The global simulation event designed to inspire, equip, and empower you to innovate.

May 14-16, 2024

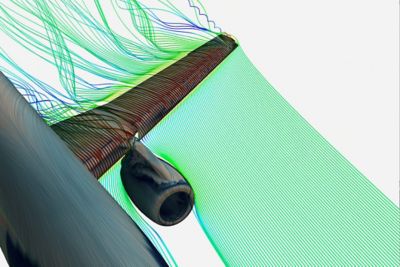

NASCAR Simulates Safety with Next Gen Car

See how simulation enables NASCAR to continuously evolve its Next Gen vehicle designs to mitigate risks on the track.

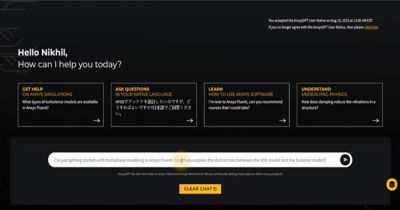

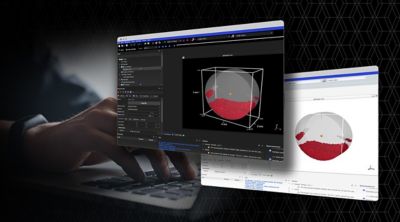

Elevating Interface and Experience: Ansys 2024 R1

A new user experience enhances collaborative engineering environments, making powerful multiphysics solutions more accessible while amplifying the benefits of AI-driven digital engineering solutions.

The Intersection of AI and Simulation Technology

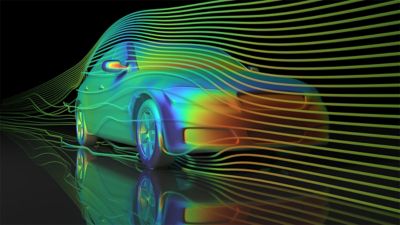

Since the mid-20th century, scientists and engineers have tested, validated, and improved their designs with simulation. Simulation software generates synthetic data, and AI turns that data into real-time insights that fill in the gaps of what’s possible, making simulation faster.

See What Ansys Can Do For You

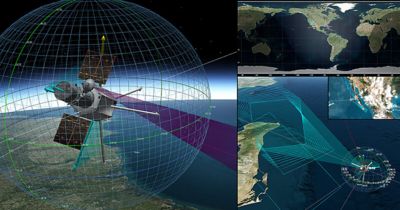

Solving the Unsolvable

Without simulation, there are no autonomous vehicles. No 5G networks. No space exploration. Ansys multiphysics software solutions and digital mission engineering help companies innovate and validate like never before.

The Latest From Ansys

Contact us today
